Concepts

- How it works

- Secrets Store CSI Driver

- Provider for the Secrets Store CSI Driver

- Security

- Custom Resource Definitions (CRDs)

How it works

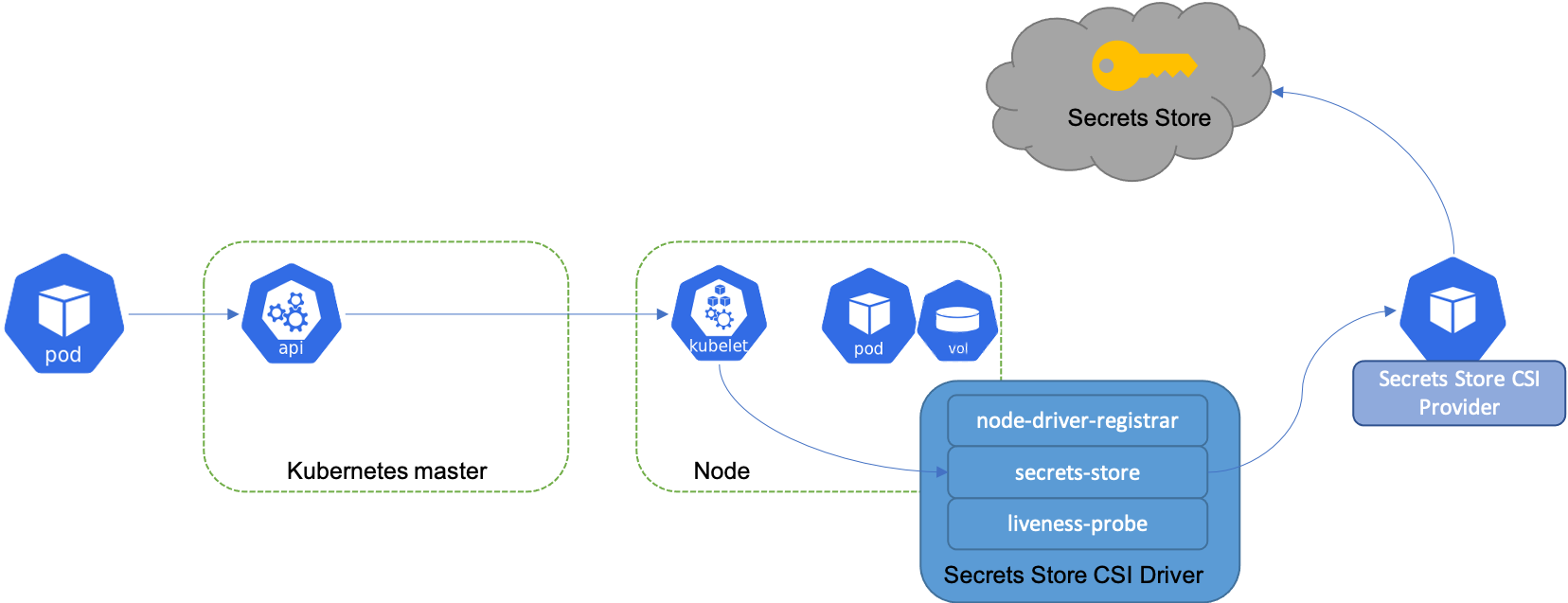

The diagram below illustrates how Secrets Store CSI volume works:

Similar to Kubernetes secrets, on pod start and restart, the Secrets Store CSI driver communicates with the provider using gRPC to retrieve the secret content from the external Secrets Store specified in the SecretProviderClass custom resource. Then the volume is mounted in the pod as tmpfs and the secret contents are written to the volume.

On pod delete, the corresponding volume is cleaned up and deleted.

Secrets Store CSI Driver

The Secrets Store CSI Driver is a daemonset that facilitates communication with every instance of Kubelet. Each driver pod has the following containers:

- node-driver-registrar: Responsible for registering the CSI driver with Kubelet so that it knows which unix domain socket to issue the CSI calls on. This sidecar container is provided by the Kubernetes CSI team. See doc for more details.

- secrets-store: Implements the CSI Node service gRPC services described in the CSI specification. It’s responsible for mounting/unmounting the volumes during pod creation/deletion. This component is developed and maintained in this repo.

- liveness-probe: Responsible for monitoring the health of the CSI driver and reporting it to Kubernetes. This enables Kubernetes to automatically detect issues with the driver and restart the pod to try and fix the issue. This sidecar container is provided by the Kubernetes CSI team. See doc for more details.
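As a rough illustration of the layout described above, the driver pod template might be organized as in the following sketch. Only the three container names come from the description above; the DaemonSet name, namespace, labels, and image references are placeholders assumed for illustration, and the manifests shipped with the driver contain many more fields (arguments, volumes, probes, security settings).

```yaml
# Illustrative skeleton only; not the manifest shipped with the driver.
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: csi-secrets-store              # hypothetical name
  namespace: kube-system               # assumed namespace
spec:
  selector:
    matchLabels:
      app: csi-secrets-store           # hypothetical label
  template:
    metadata:
      labels:
        app: csi-secrets-store
    spec:
      containers:
        - name: node-driver-registrar  # registers the driver socket with Kubelet
          image: example.invalid/node-driver-registrar:TAG   # placeholder image
        - name: secrets-store          # implements the CSI Node service
          image: example.invalid/secrets-store-driver:TAG    # placeholder image
        - name: liveness-probe         # reports driver health to Kubernetes
          image: example.invalid/livenessprobe:TAG           # placeholder image
```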

Provider for the Secrets Store CSI Driver

The CSI driver communicates with the provider using gRPC to fetch the mount contents from the external Secrets Store. Refer to doc for more details on how to implement a provider for the driver and the criteria for supported providers.

Currently supported providers:

- Akeyless Provider

- AWS Provider

- Azure Provider

- Conjur Provider

- GCP Provider

- OpenBao Provider

- Vault Provider

Security

The Secrets Store CSI Driver daemonset runs as root in a privileged pod. This is because the daemonset is responsible for creating new tmpfs filesystems and mounting them into existing pod filesystems within the node’s hostPath. root is necessary for the mount syscall and other filesystem operations, and privileged is required to use mountPropagation: Bidirectional to modify other running pods’ filesystems.
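These two requirements correspond to standard Kubernetes pod fields. The fragment below is a minimal sketch of how they might appear on the driver container; the volume name, the explicit runAsUser, and the kubelet path are assumptions for illustration and may differ from the manifests shipped with the driver.

```yaml
# Fragment of a pod template spec (sketch, not the shipped manifest).
containers:
  - name: secrets-store
    securityContext:
      privileged: true                   # required for Bidirectional mount propagation
      runAsUser: 0                       # root, made explicit here; needed for the mount syscall
    volumeMounts:
      - name: mountpoint-dir             # hypothetical volume name
        mountPath: /var/lib/kubelet/pods
        mountPropagation: Bidirectional  # mounts created here propagate back to the host
volumes:
  - name: mountpoint-dir
    hostPath:
      path: /var/lib/kubelet/pods        # assumed default kubelet pods directory
      type: DirectoryOrCreate
```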

The provider plugins are also required to run as root (though privileged should not be necessary). This is because the provider plugin must create a unix domain socket in a hostPath for the driver to connect to.

Further, service account tokens for pods that require secrets may be forwarded from the kubelet process to the driver and then to provider plugins. This allows the provider to impersonate the pod when contacting the external secret API.
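Token forwarding of this kind is typically configured through the tokenRequests field of the Kubernetes CSIDriver object, which asks Kubelet to pass pod service account tokens to the driver at mount time. The following is a sketch under that assumption; the audience value is a placeholder, and the other spec fields are assumptions about how the driver might be registered rather than a copy of its shipped manifest.

```yaml
apiVersion: storage.k8s.io/v1
kind: CSIDriver
metadata:
  name: secrets-store.csi.k8s.io
spec:
  podInfoOnMount: true             # assumed: pass pod name/namespace/UID to the driver
  attachRequired: false            # assumed: no attach step for ephemeral volumes
  volumeLifecycleModes:
    - Ephemeral
  tokenRequests:
    - audience: "example-audience" # placeholder; depends on the external secret API
      expirationSeconds: 3600      # illustrative token lifetime
```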

Note: On Windows hosts, secrets will be written to the node’s filesystem, which may be persistent storage. This contrasts with Linux, where a tmpfs is used to try to ensure that secret material is never persisted.

Note: Kubernetes 1.22 introduced a way to configure nodes to use swap memory; however, if this is used then secret material may be persisted to the node’s disk. To ensure that secrets are not written to persistent disk, ensure failSwapOn is set to true (which is the default).
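For reference, failSwapOn is a field of the kubelet configuration file. The following is a minimal sketch that sets the default explicitly; how this file is delivered to nodes depends on the cluster setup and is not covered here.

```yaml
apiVersion: kubelet.config.k8s.io/v1beta1
kind: KubeletConfiguration
failSwapOn: true    # kubelet refuses to start if swap is enabled on the node
```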

Security implications of using Secrets Store CSI driver

Anyone who has access to the namespace or its resources can exec and view the secrets. However, this behavior is expected, as entities with such access are expected to perform these operations. One potential solution is to disable exec if a more stringent security posture is required. Alternatively, using distroless images for applications can mitigate this, as exec won’t work. A similar argument can be made for individuals with access to the underlying infrastructure or nodes, who can access the secrets by SSHing into the node. However, typical end users do not have this level of access. If an end user does gain such access, it indicates a compromised infrastructure/cluster, and the recommended solution is to restrict access to the cluster/infrastructure.
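One way to approximate disabling exec is through RBAC: because Kubernetes RBAC is additive, a subject can only exec into pods if some role grants it create on the pods/exec subresource. The Role below is a sketch that grants read access to pods and their logs while leaving pods/exec out. The role name and namespace are hypothetical, and it only has the intended effect if no other role bound to the same subject grants pods/exec.

```yaml
apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  name: pod-reader-no-exec     # hypothetical name
  namespace: default           # hypothetical namespace
rules:
  - apiGroups: [""]
    resources: ["pods", "pods/log"]
    verbs: ["get", "list", "watch"]
  # "pods/exec" is deliberately not listed, so subjects bound only to this
  # Role cannot exec into pods and read the mounted secret files.
```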

Encrypting mounted content can be a way to further protect the secrets. However, this introduces additional operational overhead, such as managing encryption keys and addressing key rotation. Key management then becomes as crucial as secrets management itself.

Consider the perspective of an application and the access it has. An Ingress Controller, for instance, typically requires cluster-wide access to Kubernetes Secrets. If a component like Ingress is compromised, it could jeopardize all secrets in the cluster. This is where the Secrets Store CSI driver proves valuable, as it can mount/sync only the necessary TLS certificates on the Ingress Pod, reducing the blast radius.
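As a sketch of this pattern, a SecretProviderClass can list only the certificate objects the Ingress pod needs and, optionally, sync them into a Kubernetes Secret of type kubernetes.io/tls through the secretObjects field. The resource names, namespace, and object names below are hypothetical, and the provider-specific parameters are elided because they depend on the provider in use.

```yaml
apiVersion: secrets-store.csi.x-k8s.io/v1
kind: SecretProviderClass
metadata:
  name: ingress-tls-spc              # hypothetical
  namespace: ingress                 # hypothetical; must match the Ingress pod's namespace
spec:
  provider: azure                    # any supported provider
  secretObjects:
    - secretName: ingress-tls        # hypothetical name of the synced Kubernetes Secret
      type: kubernetes.io/tls
      data:
        - objectName: ingress-cert   # hypothetical; must match an object mounted by the provider
          key: tls.crt
        - objectName: ingress-cert
          key: tls.key
  parameters: {}                     # provider-specific parameters go here
```

Syncing generally requires the driver's optional secret sync feature to be enabled and a pod that actually mounts the volume; without a mounting pod, no Secret is created.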

Custom Resource Definitions (CRDs)

SecretProviderClass

The SecretProviderClass is a namespaced resource in Secrets Store CSI Driver that is used to provide driver configurations and provider-specific parameters to the CSI driver.

SecretProviderClass custom resource should have the following components:

    apiVersion: secrets-store.csi.x-k8s.io/v1
    kind: SecretProviderClass
    metadata:
      name: my-provider
    spec:
      provider: vault   # accepted provider options: akeyless or azure or vault or gcp
      parameters:       # provider-specific parameters

Refer to the provider docs for the required provider-specific parameters.

Here is an example of a SecretProviderClass resource:

    apiVersion: secrets-store.csi.x-k8s.io/v1
    kind: SecretProviderClass
    metadata:
      name: my-provider
      namespace: default
    spec:
      provider: azure
      parameters:
        usePodIdentity: "false"
        useManagedIdentity: "false"
        keyvaultName: "$KEYVAULT_NAME"
        objects: |
          array:
            - |
              objectName: $SECRET_NAME
              objectType: secret
              objectVersion: $SECRET_VERSION
            - |
              objectName: $KEY_NAME
              objectType: key
              objectVersion: $KEY_VERSION
        tenantId: "$TENANT_ID"

Reference the SecretProviderClass in the pod volumes when using the CSI driver:

    volumes:
      - name: secrets-store-inline
        csi:
          driver: secrets-store.csi.k8s.io
          readOnly: true
          volumeAttributes:
            secretProviderClass: "my-provider"

NOTE: The SecretProviderClass needs to be created in the same namespace as the pod.
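Putting the pieces together, a complete pod manifest might look like the following sketch. The volumes entry is the one shown above; the pod name, container image, command, and mountPath are assumptions chosen for illustration.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: secrets-store-demo              # hypothetical
  namespace: default                    # same namespace as the SecretProviderClass
spec:
  containers:
    - name: app
      image: busybox:1.36               # placeholder image
      command: ["sleep", "3600"]
      volumeMounts:
        - name: secrets-store-inline    # must match the volume name below
          mountPath: /mnt/secrets-store # assumed mount path
          readOnly: true
  volumes:
    - name: secrets-store-inline
      csi:
        driver: secrets-store.csi.k8s.io
        readOnly: true
        volumeAttributes:
          secretProviderClass: "my-provider"
```

With this layout, each object fetched by the provider would appear as a file under the mountPath inside the container.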

SecretProviderClassPodStatus

The SecretProviderClassPodStatus is a namespaced resource in Secrets Store CSI Driver that is created by the CSI driver to track the binding between a pod and SecretProviderClass. The SecretProviderClassPodStatus contains details about the current object versions that have been loaded in the pod mount.

The SecretProviderClassPodStatus is created by the CSI driver in the same namespace as the pod and SecretProviderClass with the name <pod name>-<namespace>-<secretproviderclass name>.

Here is an example of a SecretProviderClassPodStatus resource:

    apiVersion: secrets-store.csi.x-k8s.io/v1
    kind: SecretProviderClassPodStatus
    metadata:
      creationTimestamp: "2021-01-21T19:20:11Z"
      generation: 1
      labels:
        internal.secrets-store.csi.k8s.io/node-name: kind-control-plane
        manager: secrets-store-csi
        operation: Update
        time: "2021-01-21T19:20:11Z"
      name: nginx-secrets-store-inline-crd-dev-azure-spc
      namespace: dev
      ownerReferences:
      - apiVersion: v1
        kind: Pod
        name: nginx-secrets-store-inline-crd
        uid: 10f3e31c-d20b-4e46-921a-39e4cace6db2
      resourceVersion: "1638459"
      selfLink: /apis/secrets-store.csi.x-k8s.io/v1/namespaces/dev/secretproviderclasspodstatuses/nginx-secrets-store-inline-crd
      uid: 1d078ad7-c363-4147-a7e1-234d4b9e0d53
    status:
      mounted: true
      objects:
      - id: secret/secret1
        version: c55925c29c6743dcb9bb4bf091be03b0
      - id: secret/secret2
        version: 7521273d0e6e427dbda34e033558027a
      podName: nginx-secrets-store-inline-crd
      secretProviderClassName: azure-spc
      targetPath: /var/lib/kubelet/pods/10f3e31c-d20b-4e46-921a-39e4cace6db2/volumes/kubernetes.io~csi/secrets-store-inline/mount

The pod for which the SecretProviderClassPodStatus was created is set as owner. When the pod is deleted, the SecretProviderClassPodStatus resources associated with the pod get automatically deleted.